What it is, why it matters beyond the developer community, and where most people are leaving results on the table.

If you have spent any time using AI tools in the last couple of years, you have probably noticed that the quality of what you get back varies enormously. Sometimes the output is exactly what you needed. Other times it is generic, off-target, or structured in a way that is not useful at all. The difference, more often than not, comes down to how you asked.

That is what prompt engineering is, at its core. It is the practice of designing inputs to AI systems to get outputs that are reliably useful. It sounds simple enough, and in some ways it is. But there is more depth to it than the name suggests, and it is becoming one of the more practically valuable skills across a wide range of roles, not just engineering.

A prompt is any input you give to a language model. In the simplest case, it is a question or an instruction. But in practice, a prompt is a design artefact. It can include context about who you are and what you are doing, examples of the output format you want, constraints on what the model should or should not do, and a clear statement of the task itself.

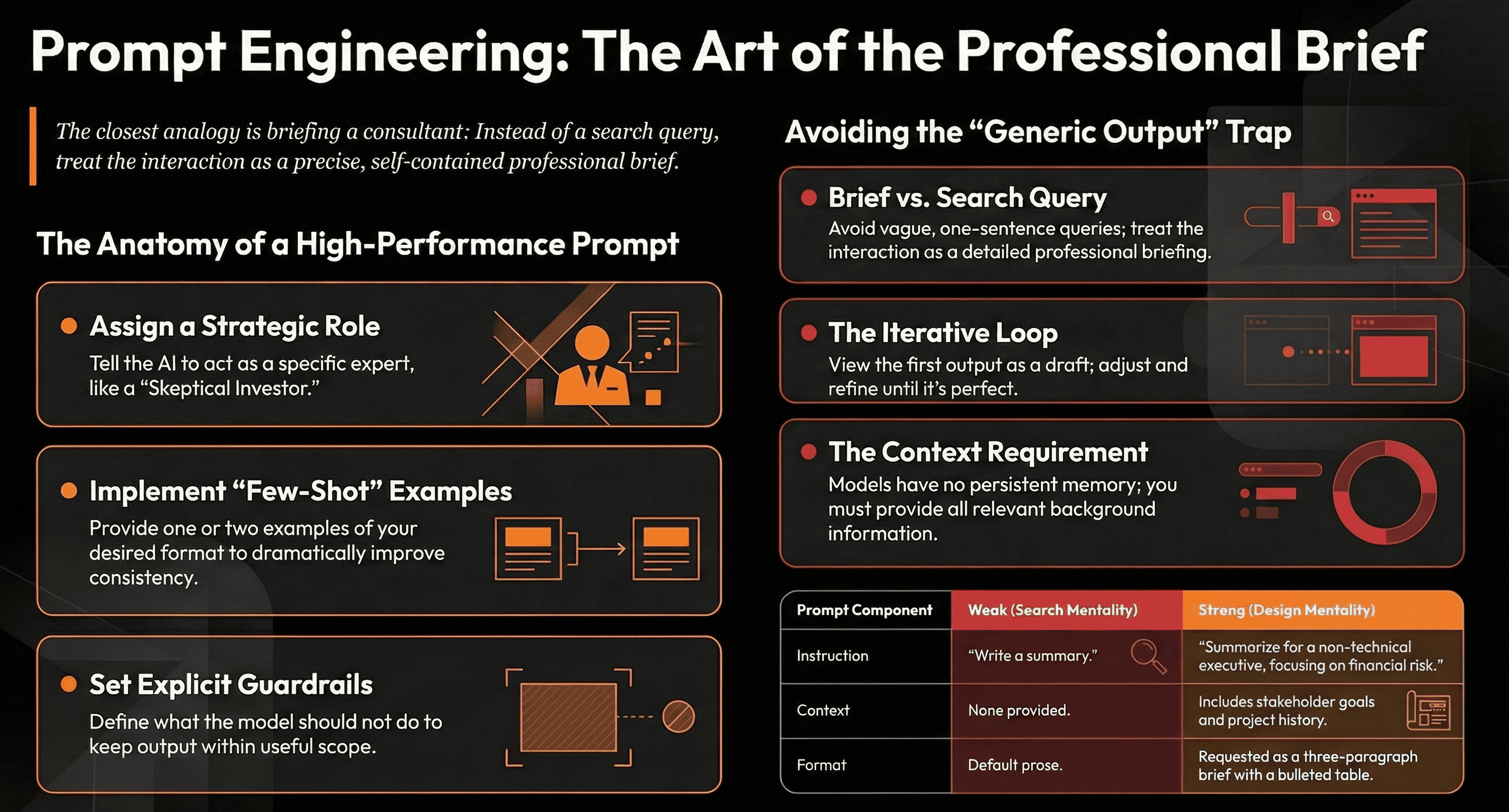
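To make the idea of a prompt as a design artefact concrete, here is a minimal sketch in Python that assembles the parts listed above into one self-contained brief. The `PromptBrief` class and its field names are hypothetical, invented for illustration; they do not belong to any library or vendor API, and the section labels are just one reasonable layout.

```python
from dataclasses import dataclass, field


@dataclass
class PromptBrief:
    """Hypothetical container for the parts of a prompt (illustration only)."""

    task: str
    context: str = ""
    examples: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Join the non-empty parts into one prompt, ending with the task."""
        sections = []
        if self.context:
            sections.append(f"Context:\n{self.context}")
        if self.examples:
            joined = "\n\n".join(self.examples)
            sections.append(f"Examples of the output I want:\n{joined}")
        if self.constraints:
            bullets = "\n".join(f"- {c}" for c in self.constraints)
            sections.append(f"Constraints:\n{bullets}")
        sections.append(f"Task:\n{self.task}")
        return "\n\n".join(sections)


brief = PromptBrief(
    task="Write a three-paragraph summary of the attached document.",
    context="I am a product manager preparing a board update.",
    constraints=[
        "Write for a non-technical executive.",
        "Focus on the financial implications.",
    ],
)
prompt = brief.render()
print(prompt)
```

Writing the parts down as separate fields makes it easy to see which ones you left empty before you send the prompt.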

Language models do not have persistent memory between conversations, and they have no inherent understanding of your specific situation. Everything the model knows about your context in a given interaction is what you put in the prompt. That framing changes how you should think about the exercise. You are not talking to someone who already knows you. You are writing a very precise, self-contained brief.

The closest analogy is briefing a consultant on their first day. They are highly capable, but they know nothing about your organisation, your constraints, or your preferences. The quality of the work they produce is directly proportional to the quality of the brief you give them.

Prompt engineering started as a technical discipline. Early on, it was primarily about finding the exact phrasing that would get a model to perform a specific task reliably, a concern for researchers and ML engineers. That is still a part of it. But as AI tools have become embedded in everyday workflows, the skill has become relevant to anyone who works with these systems regularly.

A product manager writing requirements that an AI tool will help research and structure. A consultant using Claude or ChatGPT to synthesise a set of documents. A marketing lead generating copy variants at scale. All of these use cases involve prompt design, even if nobody is calling it that. The people who get consistently better outputs are not necessarily more technically fluent; they are more deliberate about how they communicate with the model.

In organisations that are seriously integrating AI into their operations, this skill starts to have compounding value. A team that knows how to prompt well gets more leverage from the same tools than a team that does not. The gap comes from how the tools are used, not from which model you are using.

There is no single correct way to write a prompt, and what works well varies by task and model. But there are a few principles that consistently improve results.

Be specific about the task. Vague instructions produce vague outputs. The more precisely you define what you want (the format, the length, the angle, the audience), the closer the output will be to what you actually need. 'Write a summary' is a weak prompt. 'Write a three-paragraph summary of this document, written for a non-technical executive, focusing on the financial implications' is considerably better.

Give the model a role or perspective. Language models respond well to being told who they are in the context of the task. Asking a model to respond as an experienced software architect, or a senior editor, or a sceptical investor, shifts the lens through which it generates output in ways that are often genuinely useful.

Provide examples. If you have a clear sense of what good output looks like, show it. Including one or two examples of the format or style you want, often called few-shot prompting, dramatically improves consistency. This is especially useful for structured outputs like tables, reports, or formatted lists.
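As a rough illustration of few-shot prompting, the sketch below builds a prompt from an instruction, two worked input/output pairs, and a new input left open for the model to complete. The `few_shot_prompt` helper, the `Input:`/`Output:` labels, and the bug-report data are all assumptions made up for this example; other layouts work just as well.

```python
def few_shot_prompt(instruction, examples, new_input):
    """Build a few-shot prompt: instruction, worked examples, then the new case."""
    parts = [instruction]
    for source, wanted in examples:
        parts.append(f"Input: {source}\nOutput: {wanted}")
    # End with an open "Output:" so the model continues the established pattern.
    parts.append(f"Input: {new_input}\nOutput:")
    return "\n\n".join(parts)


# Invented sample data showing the row format we want back.
examples = [
    ("Login page times out after 30 seconds", "| Login | Timeout | High |"),
    ("Typo in the footer copyright year", "| Footer | Typo | Low |"),
]

prompt = few_shot_prompt(
    "Turn each bug report into a table row: | Area | Issue | Priority |",
    examples,
    "Export button produces an empty CSV file",
)
print(prompt)
```

The examples carry the format, so the instruction itself can stay short.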

Set constraints explicitly. If there are things the output should not do or should not include, say so. Models will not infer your constraints from silence. If you do not want the model to speculate, tell it. If you need it to cite its reasoning, ask for that. Constraints are not limitations on the model; they are guardrails that keep the output within the scope of what is actually useful.

One useful test: could someone else read your prompt, with no prior context, and know exactly what output you are looking for? If not, the prompt is probably underspecified.

The most common issue is treating a prompt like a search query. People type a few words, get a mediocre result, assume the tool is not very good, and move on. In most cases, the tool would have performed significantly better with a more complete brief.

A related issue is not iterating. Prompt engineering is, in practice, a loop. You write a prompt, you evaluate the output, you adjust the prompt based on what was off, and you try again. Treating the first output as final, unless it happens to be exactly right, leaves most of the value on the table.

Another common mistake is prompting without providing relevant context. If you want the model to help you draft a stakeholder update, and you give it no information about who the stakeholders are, what they care about, or what has happened since the last update, the output will be generic by necessity. The model cannot read your mind. Context is not optional; it is the input that makes personalised, relevant output possible.

Finally, people often neglect to specify the output format. A model will choose a structure if you do not give it one, and its default choices may not match how you intend to use the output. If you need a table, ask for a table. If you need plain prose with no bullet points, say so. Format is part of the brief.
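When the output feeds into another tool rather than a human reader, it can help to state the format in the prompt and then check the reply against it. The sketch below is one possible approach, assuming you ask for JSON; the key names, the `parse_reply` helper, and the sample reply are hypothetical, and the reply is hand-written rather than real model output.

```python
import json

# Format instruction to append to the prompt (wording is illustrative).
FORMAT_INSTRUCTION = (
    "Respond with a JSON object only, with exactly these keys: "
    '"summary" (string) and "risks" (list of strings). '
    "Do not add any text before or after the JSON."
)

REQUIRED_KEYS = {"summary", "risks"}


def parse_reply(reply: str) -> dict:
    """Check that a reply matches the requested format and return it as a dict."""
    # json.loads raises json.JSONDecodeError (a ValueError) on non-JSON text.
    data = json.loads(reply)
    if not isinstance(data, dict):
        raise ValueError("Reply is valid JSON but not an object")
    missing = REQUIRED_KEYS - set(data)
    if missing:
        raise ValueError(f"Reply is missing keys: {sorted(missing)}")
    return data


# A hand-written stand-in for a model reply, not real model output.
sample_reply = '{"summary": "Costs rose 4% in Q2.", "risks": ["Supplier delay"]}'
parsed = parse_reply(sample_reply)
print(parsed["risks"])
```

A failed check is a useful signal in itself: it usually means the format instruction needs to be more explicit.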

For teams building products or workflows on top of AI models, prompt engineering scales up from an individual skill to a systems-level concern. The prompts embedded in your application (the system prompt that defines how your AI feature behaves, the instructions passed to an agent, the templates your automation uses) are all design decisions with real consequences for quality and consistency.

At that level, prompt design sits alongside software design as something that needs to be deliberately maintained and improved. A poorly designed system prompt is a bug. An under-specified instruction set in an agent workflow is a reliability risk. Treating prompts as afterthoughts, rather than as core design artefacts, leads to AI integrations that underperform and are difficult to debug.
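One way to treat a system prompt as a maintained artefact, sketched here under stated assumptions, is to keep it in code as a versioned template and run simple checks on it, much as you would test any other component. Everything in this example is hypothetical: the product name, the prompt wording, the version label, and the two checks, which stand in for whatever rules matter in your own application.

```python
from string import Template

# Hypothetical system prompt for an illustrative support assistant.
SYSTEM_PROMPT_VERSION = "2"
SYSTEM_PROMPT = Template(
    "You are a support assistant for $product.\n"
    "Answer only from the provided help articles.\n"
    "If the answer is not in the articles, say you do not know.\n"
    "Reply in plain prose with no bullet points."
)


def render_system_prompt(product: str) -> str:
    """Fill in the template; substitute() raises KeyError on a missing value."""
    return SYSTEM_PROMPT.substitute(product=product)


def check_system_prompt(text: str) -> list[str]:
    """Return a list of problems; an empty list means the checks passed."""
    problems = []
    if "$" in text:
        problems.append("unfilled placeholder")
    if "do not know" not in text:
        problems.append("missing fallback instruction")
    return problems


rendered = render_system_prompt("Acme Notes")
print(f"v{SYSTEM_PROMPT_VERSION}:", check_system_prompt(rendered))
```

Checks like these do not measure output quality, but they catch the accidental edits that otherwise surface as hard-to-debug behaviour changes.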

The teams that build the best AI-powered features are not necessarily the ones with access to the best models. They are the ones that take prompt design seriously as an engineering discipline.

If you are new to this, the fastest way to improve is to start paying attention to what changes when you change the prompt. Take a task you already use AI for, and run it three different ways: once with your usual approach, once with a more detailed brief, and once with an example of the output you want included. Compare the results. The differences will be instructive.
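If it helps to keep the comparison organised, the three variants can be written down side by side before you run them. This is only a sketch: the task, the brief, and the example line are invented, and the script just prints the prompts so you can paste each one into whichever tool you already use.

```python
task = "Summarise the attached meeting notes."

# Three versions of the same request, from least to most specified.
variants = {
    "usual": task,
    "detailed_brief": (
        f"{task}\n"
        "Audience: a project sponsor who missed the meeting.\n"
        "Length: five bullet points at most.\n"
        "Focus: decisions made and who owns each action."
    ),
    "with_example": (
        f"{task}\n"
        "Follow the style of this example line:\n"
        "- Decision: move launch to March. Owner: Priya. Due: 14 Feb."
    ),
}

for name, prompt in variants.items():
    # Word count is a crude proxy for how much detail each brief carries.
    print(f"--- {name} ({len(prompt.split())} words) ---")
    print(prompt)
    print()
```

Keeping the variants together also gives you a record of what you changed when you look back at the outputs.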

Prompt engineering is not a complex discipline to get started with. The fundamentals are learnable in an afternoon. What makes it valuable over time is the habit of being deliberate, of treating the prompt as a design decision rather than an incidental input.

If you are integrating AI into your product or operations and want to think through the design decisions involved, we are happy to have that conversation. Feel free to reach out.