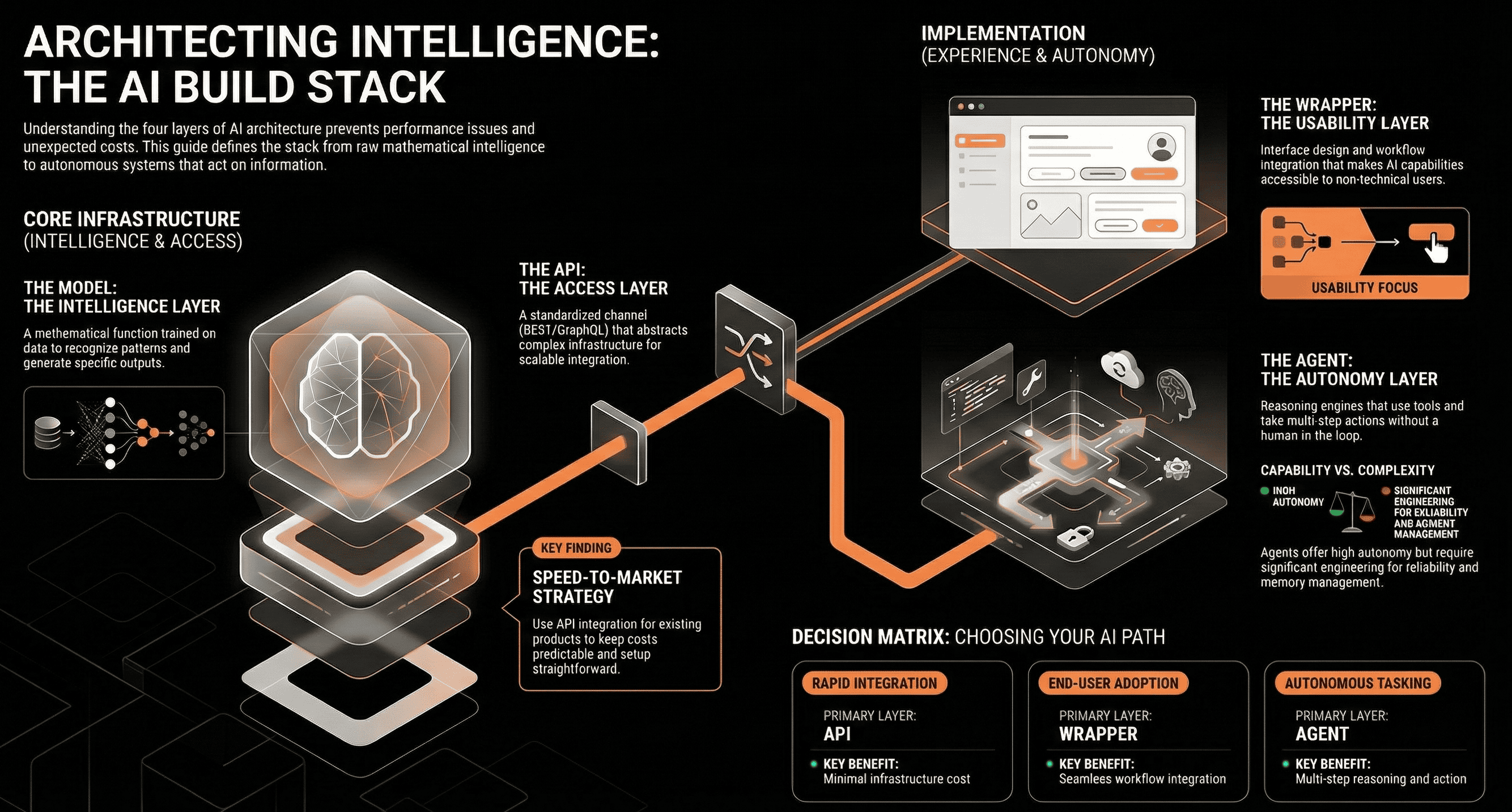

TL;DR: AI "models" are the trained algorithms that perform tasks. They're often accessed via "APIs," which can be further packaged by "product wrappers" into user-friendly applications, sometimes orchestrating "agents" for autonomous actions. Understanding these is key to smart AI builds.

If you've spent any time close to an AI project, you've heard the terms: model, API, agent, wrapper. They get used interchangeably, loosely, sometimes incorrectly. That imprecision is fine in casual conversation, but when you're making architectural decisions, it starts to cost you. The wrong choice at the design stage shows up later as performance problems, unexpected costs, or systems that are painful to maintain.

This article breaks down what each of these components actually is, how they relate to each other, and how understanding the distinction leads to better decisions.

An AI model is, at its most basic, a mathematical function. It takes an input and produces an output. What makes it interesting is how that function was built: by exposing an algorithm to large amounts of data and adjusting its internal parameters through a training process until its outputs become reliably useful.
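To make the idea of a trained function concrete, here is a minimal Python sketch of about the smallest model possible: a straight line, y = w·x + b, whose two parameters are learned from examples by gradient descent. The toy data, learning rate and epoch count are assumptions chosen for illustration. Production models have vastly more parameters and richer architectures, but for neural-network models the cycle of predicting, measuring the error and nudging the parameters is broadly the same.

```python
# A "model" reduced to its essentials: a function with adjustable parameters.
# Here the function is a straight line, y = w * x + b, and training adjusts
# w and b by gradient descent to reduce mean squared error on example data.


def predict(w: float, b: float, x: float) -> float:
    """The model itself: input in, output out."""
    return w * x + b


def train(data: list[tuple[float, float]], lr: float = 0.01,
          epochs: int = 2000) -> tuple[float, float]:
    """Adjust w and b so predictions match the training examples."""
    w, b = 0.0, 0.0  # start with uninformed parameters
    n = len(data)
    for _ in range(epochs):
        # Gradients of mean squared error with respect to w and b.
        grad_w = sum(2 * (predict(w, b, x) - y) * x for x, y in data) / n
        grad_b = sum(2 * (predict(w, b, x) - y) for x, y in data) / n
        # Step each parameter against its gradient.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Toy training data generated from y = 3x + 1 (an assumption for illustration).
examples = [(x, 3 * x + 1) for x in range(-5, 6)]
w, b = train(examples)
print(f"learned w={w:.2f}, b={b:.2f}")
print(f"prediction for x=10: {predict(w, b, 10):.2f}")
```

Once training stops, the two learned numbers are the model, and calling `predict` on new inputs is inference.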

There are several broad categories of models in use today. Large language models (LLMs) like GPT-4 and Claude process and generate text. Computer vision models interpret images and video. Predictive models analyse historical data to forecast outcomes. Each type is trained on different data and optimised for a different kind of task.

Models are not static. They evolve through continued training, fine-tuning on domain-specific data, and feedback loops. GPT-4 shows how capable a general-purpose model can become, while AlphaFold, DeepMind's model for predicting protein structures, is a good example of how far this can go when a model is trained specifically for a well-defined problem. AlphaFold didn't just perform well on a benchmark. It changed how researchers approach protein structure, with knock-on effects for fields like drug discovery.

Most organisations working with AI don't train their own models. They access models built and maintained by others, and they do this through an API, an Application Programming Interface. In the AI context, an API is a defined channel through which your application can send a request to a model and receive a response.

The OpenAI API lets you send a prompt and receive a generated text completion. Google Cloud's Vision API lets you send an image and receive structured information about what's in it. The interface abstracts away everything happening underneath (the infrastructure, the inference compute, the model weights) and gives you a clean, standardised way to integrate AI capability into your own system.
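As a rough illustration of what "send a prompt, receive a completion" looks like on the wire, the Python sketch below uses only the standard library to build a request for OpenAI's Chat Completions endpoint (`POST https://api.openai.com/v1/chat/completions`). It assumes a valid API key and uses `gpt-4o-mini` as a placeholder model name; check the provider's current documentation for available models and fields. The provider's official SDKs wrap this same HTTP call.

```python
import json
import urllib.request

# Endpoint for OpenAI's Chat Completions API.
OPENAI_URL = "https://api.openai.com/v1/chat/completions"


def build_completion_request(prompt: str, api_key: str, model: str) -> urllib.request.Request:
    """Package a prompt as an HTTP request; nothing is sent yet."""
    body = {
        "model": model,  # which hosted model should handle the request
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENAI_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and pull the generated text out of the JSON response."""
    with urllib.request.urlopen(request) as response:
        payload = json.load(response)
    return payload["choices"][0]["message"]["content"]


req = build_completion_request(
    "Summarise the difference between a model and an API in one sentence.",
    api_key="YOUR_API_KEY",  # placeholder, not a real key
    model="gpt-4o-mini",     # assumed model name; check the provider's current list
)
print(req.get_method(), req.full_url)
# send(req) would perform the call; it needs a real key and network access.
```

Nothing in the client code knows about GPUs, weights or model architecture; all of that sits behind the endpoint.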

REST and GraphQL are two architectural patterns you'll commonly encounter, and most hosted model APIs, including the OpenAI and Google Cloud Vision APIs, are REST-style. REST is simpler and works well for most use cases. GraphQL gives clients more control over exactly which data they request, which matters when you're working with more complex data structures or need to minimise unnecessary data transfer.
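To show the difference in shape between the two patterns, here is a sketch of how a client might ask a hypothetical service for details about one model. The `api.example.com` URLs, the `model` field and its `name`/`contextWindow` subfields are invented for illustration. In REST the URL names the resource and the server decides what comes back; in GraphQL there is a single endpoint and the query lists exactly the fields the client wants.

```python
import json

# Hypothetical service exposing the same data two ways. The URLs, resource
# names and GraphQL schema below are illustrative assumptions.
REST_URL = "https://api.example.com/v1/models/{model_id}"
GRAPHQL_URL = "https://api.example.com/graphql"


def rest_request(model_id: str) -> tuple[str, str]:
    """REST: the URL identifies the resource; the server decides which fields come back."""
    return "GET", REST_URL.format(model_id=model_id)


def graphql_request(model_id: str, fields: list[str]) -> tuple[str, str, str]:
    """GraphQL: one endpoint; the client's query names exactly the fields it wants."""
    query = "query ($id: ID!) { model(id: $id) { " + " ".join(fields) + " } }"
    body = json.dumps({"query": query, "variables": {"id": model_id}})
    return "POST", GRAPHQL_URL, body


print(rest_request("summariser-v2"))
print(graphql_request("summariser-v2", ["name", "contextWindow"]))
```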

APIs are what make AI scalable at the product level. Without them, every team that wanted to use a language model would need to operate one themselves. That's rarely practical.

An API gives you access to a model. A product wrapper turns that access into something a non-technical user can interact with.

Canva's Magic Studio is a good example. The underlying capability, generating or editing images from text prompts, comes from image generation models. But Canva wraps that capability in a design interface, applies brand guardrails, integrates it with the user's existing projects, and makes it feel like a natural extension of the product rather than a separate AI tool bolted on. That's the work of a wrapper: interface design, workflow integration, feature scoping, and brand alignment.

Wrappers also appear in B2B software. A CRM platform that drafts follow-up emails based on meeting notes is using an LLM under the hood, but the user sees a button in their existing workflow, not an AI interface.
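A wrapper is mostly ordinary product code around a model call. The sketch below imagines the CRM "draft follow-up" button described above; the function names, prompt wording, 120-word limit and input cap are all hypothetical. The model is passed in as a plain function from prompt to text, so any API client could be plugged in, and a canned stand-in lets the example run offline.

```python
from typing import Callable

# A hypothetical "draft follow-up" feature inside a CRM. The model is passed in
# as a plain function (prompt -> text) so any API client can be plugged in;
# every name here is illustrative, not a real product's implementation.
Model = Callable[[str], str]

MAX_NOTES_CHARS = 4000  # feature scoping: cap how much input reaches the model


def draft_follow_up(notes: str, contact_name: str, sender: str, model: Model) -> str:
    """What the user experiences as a single 'Draft follow-up' button."""
    if not notes.strip():
        # Handle the empty case in the product, without spending a model call.
        return ""
    # Workflow integration: the prompt is assembled from CRM data the user
    # already has, so they never write a prompt themselves.
    prompt = (
        "Write a short, polite follow-up email.\n"
        f"Recipient: {contact_name}\n"
        f"Sender: {sender}\n"
        "Tone: professional, no more than 120 words.\n"  # tone guardrail
        f"Meeting notes:\n{notes[:MAX_NOTES_CHARS]}"
    )
    draft = model(prompt).strip()
    # Post-processing: make sure the draft is signed consistently.
    if not draft.endswith(sender):
        draft += f"\n\nBest regards,\n{sender}"
    return draft


def fake_model(prompt: str) -> str:
    """Stand-in for a real API call, so the sketch runs offline."""
    return "Hi Dana,\n\nThanks for your time today. Here are the next steps we discussed."


print(draft_follow_up("Agreed on pilot scope; send pricing by Friday.", "Dana", "Sam", fake_model))
```

The user never sees a prompt. The input cap, the tone guardrail and the consistent sign-off all live in the wrapper, not in the model.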

The decision of when to build a wrapper versus integrate directly with an API depends on who your users are. If they're developers or technically fluent operators, direct API access may be sufficient. If they're end users who need simplicity, consistency, and a guided experience, a wrapper is usually the right layer to build.

Models answer questions. Agents do things.

An AI agent is a system that perceives its environment, makes decisions, and takes actions, often in sequence and often without a human in the loop for each step. The model provides the reasoning capability. The agent architecture adds the ability to use tools, retain context across steps, and pursue a goal over multiple actions.
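The perceive, decide, act cycle can be sketched as a loop. In this minimal Python version the `decide` function is a hard-coded stand-in for what would normally be a model call, and the `search` and `summarise` tools are hypothetical stubs. What the loop adds on top of a single model call is what the paragraph above describes: tool use, memory carried between steps, and a stopping condition tied to the goal.

```python
from typing import Callable

# A minimal agent loop: the "model" decides the next action, the loop executes
# it with a tool, and the result is appended to memory for the next decision.
# decide() is a hard-coded stand-in for an LLM call; the tools are hypothetical.

Tool = Callable[[str], str]

TOOLS: dict[str, Tool] = {
    "search": lambda q: f"3 documents found for '{q}'",
    "summarise": lambda text: f"summary of: {text}",
}


def decide(goal: str, memory: list[str]) -> tuple[str, str]:
    """Pick (tool_name, tool_input), or ('finish', answer), from goal and history."""
    if not memory:
        return "search", goal
    if len(memory) == 1:
        return "summarise", memory[-1]
    return "finish", memory[-1]


def run_agent(goal: str, max_steps: int = 5) -> tuple[str, list[str]]:
    memory: list[str] = []  # context retained across steps
    for _ in range(max_steps):
        action, arg = decide(goal, memory)
        if action == "finish":
            return arg, memory
        observation = TOOLS[action](arg)  # act on the environment via a tool
        memory.append(observation)        # perceive the result
    return "stopped: step limit reached", memory


answer, trace = run_agent("recent protein-folding results")
print(answer)
```

The `max_steps` cap matters even in a toy: without it, a model that never chooses `finish` would loop indefinitely.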

AutoGPT was an early demonstration of this: give the system a goal, and it would break it into tasks, search the web, write code, read files, and iterate until it either completed the goal or got stuck. It was rough around the edges, but it showed what becomes possible when you stop treating a model as a question-answering interface and start treating it as a reasoning engine that can act.

In production, AI agents are being used for tasks like monitoring systems and triggering alerts, processing documents and routing them based on content, and running multi-step research workflows. They typically combine a model with a set of defined tools (APIs, databases, code execution environments) and a planning layer that sequences the actions.

The complexity of building a reliable agent is significantly higher than building an API integration. Memory management, error handling, action constraints, and safety boundaries all need to be thought through carefully.
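To give a sense of what those concerns look like in code, here is a sketch of a guarded tool executor that an agent loop might route every action through. The tool names, the allowlist, the approval rule, the retry count and the memory size are illustrative assumptions rather than a standard design; a production system would likely also need logging, timeouts and narrower exception handling.

```python
from collections import deque
from typing import Callable

# Hypothetical guardrails around an agent's tool calls. Tool names, the retry
# policy and the approval rule are illustrative assumptions, not a standard.
ALLOWED_TOOLS = {"read_file", "search", "delete_file"}
NEEDS_APPROVAL = {"delete_file"}  # safety boundary: destructive actions


class GuardedExecutor:
    def __init__(self, tools: dict[str, Callable[[str], str]],
                 approve: Callable[[str, str], bool],
                 max_retries: int = 2, memory_size: int = 20):
        self.tools = tools
        self.approve = approve
        self.max_retries = max_retries
        # Memory management: keep only the most recent observations.
        self.memory: deque[str] = deque(maxlen=memory_size)

    def run(self, tool: str, arg: str) -> str:
        # Action constraint: refuse anything outside the allowlist.
        if tool not in ALLOWED_TOOLS or tool not in self.tools:
            result = f"refused: {tool} is not permitted"
        elif tool in NEEDS_APPROVAL and not self.approve(tool, arg):
            result = f"blocked: {tool} requires human approval"
        else:
            result = self._call_with_retries(tool, arg)
        self.memory.append(result)
        return result

    def _call_with_retries(self, tool: str, arg: str) -> str:
        # Error handling: retry failures, then report instead of crashing.
        last_error = ""
        for _ in range(self.max_retries + 1):
            try:
                return self.tools[tool](arg)
            except Exception as exc:  # a real system would catch narrower errors
                last_error = str(exc)
        return f"failed: {tool} ({last_error})"


calls = {"n": 0}


def flaky_search(q: str) -> str:
    """Hypothetical tool that fails once before succeeding."""
    calls["n"] += 1
    if calls["n"] < 2:
        raise TimeoutError("upstream timeout")
    return f"results for {q}"


ex = GuardedExecutor(
    tools={"search": flaky_search, "delete_file": lambda p: f"deleted {p}"},
    approve=lambda tool, arg: False,  # no human has approved anything yet
)
print(ex.run("search", "agent reliability"))     # succeeds on the retry
print(ex.run("delete_file", "/tmp/report.txt"))  # blocked by the approval rule
print(ex.run("send_email", "ceo@example.com"))   # refused: not on the allowlist
```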

Understanding these four layers (models, APIs, wrappers, agents) changes how you approach architecture.

If you need AI capability in an existing product and the primary concern is speed to market, an API integration is almost always the right starting point. You're not maintaining a model; you're consuming one. The cost is predictable, the setup is relatively straightforward, and you can iterate quickly.

If your users need a polished experience and your product's value depends on ease of use, invest in the wrapper layer. That's where most of the product work actually lives: not in the model itself, but in how you surface its capability in a way that fits your users' workflow.

If you need a system that can complete multi-step tasks autonomously, or that needs to act on information rather than just respond to it, then an agent architecture is worth the additional complexity. But be realistic about the engineering effort and the reliability requirements. Agents that work well in demos don't always work well in production.

The cost dimension matters too. Direct model access, whether you're self-hosting or training and serving your own fine-tuned version, requires significant infrastructure investment. API consumption is metered but manageable. Agents, depending on how they're built, can make many API calls per task, and those costs add up.
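A quick worked example shows how per-task call counts drive cost. The prices, token counts, call numbers and monthly volume below are hypothetical placeholders, not any provider's actual rates; substitute your own figures. The agent case assumes 12 model calls per task with larger inputs per call, since many agent designs resend accumulated context on each step.

```python
# Back-of-the-envelope cost comparison: one direct API call per task versus an
# agent that makes several model calls per task. All prices and token counts
# are hypothetical placeholders; substitute your provider's published rates.


def cost_per_task(calls: int, input_tokens: int, output_tokens: int,
                  price_in_per_m: float, price_out_per_m: float) -> float:
    """Dollar cost of one task, with prices quoted per million tokens."""
    per_call = (input_tokens * price_in_per_m
                + output_tokens * price_out_per_m) / 1_000_000
    return calls * per_call


PRICE_IN, PRICE_OUT = 2.50, 10.00  # assumed $ per 1M input / output tokens
TASKS_PER_MONTH = 10_000           # assumed volume

single = cost_per_task(1, 1_000, 300, PRICE_IN, PRICE_OUT)
# Agents often resend growing context on each step, so input tokens per call rise.
agent = cost_per_task(12, 6_000, 400, PRICE_IN, PRICE_OUT)

print(f"API integration: ${single:.4f}/task, ${single * TASKS_PER_MONTH:,.0f}/month")
print(f"Agent:           ${agent:.4f}/task, ${agent * TASKS_PER_MONTH:,.0f}/month")
```

With these assumed numbers, the single-call integration comes to about $0.0055 per task and the agent to about $0.228, roughly 40 times more for the same volume of work.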

Future scalability is often underweighted at the design stage. A system built directly on a specific model's API is easy to build and easy to change. A system with a complex agentic architecture is more powerful but harder to modify, harder to debug, and more sensitive to changes in the underlying model's behaviour.

Models are the intelligence. APIs are the access layer. Wrappers make that access usable. Agents make it autonomous.

None of these is inherently better than the others. The right choice depends on what you're trying to build, who's going to use it, what your operational constraints are, and how much complexity you can absorb. What tends to go wrong in AI projects isn't a lack of ambition; it's architectural choices made before the team had a clear picture of what each layer actually does.

If you're at the stage of planning an AI integration or evaluating how to extend an existing product with AI capabilities, we're happy to talk through the options. No obligations, just a conversation about what makes sense for your situation.