What a context window is, how it shapes what an AI system can and cannot do, and why it is one of the more important constraints to understand when building with language models.

When people talk about the capabilities of different AI models, they often focus on benchmarks: how well a model scores on reasoning tests, coding tasks, or language understanding evaluations. These are useful, but they miss one of the most practically significant variables: the context window. It determines what the model can see at any given moment and, by extension, what it can do.

Understanding context windows is not a technical nicety. It is a foundational constraint that shapes how you design systems, what trade-offs you make, and where things are likely to go wrong.

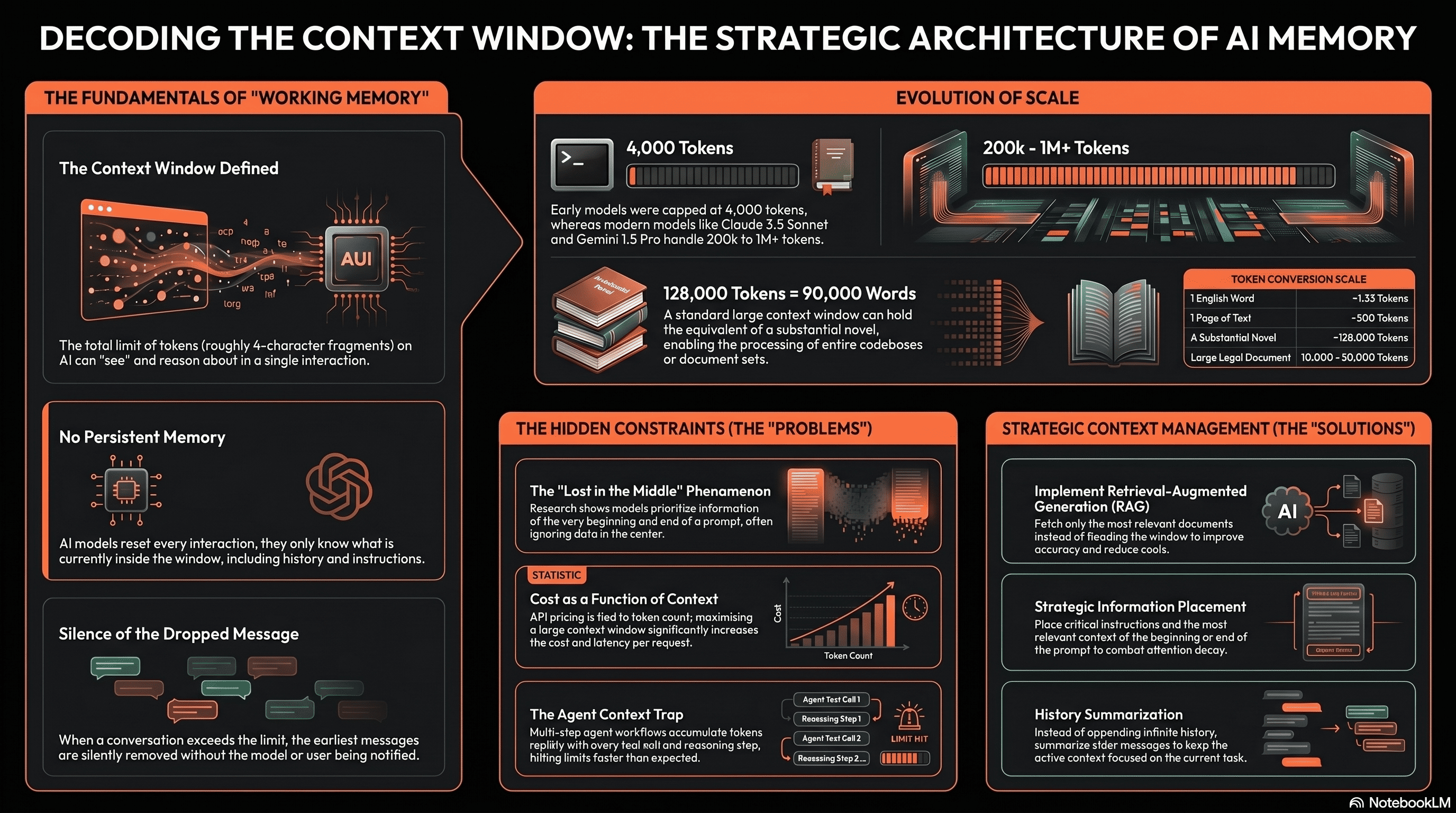

A language model does not have persistent memory. Every time you interact with it, it starts from scratch. The context window is the total amount of text, measured in tokens (roughly, word fragments), that the model can process in a single interaction. This includes everything: the system instructions, the conversation history, any documents you have provided, and the model’s own responses so far.

Think of it as the model’s working memory. It can only reason about, reference, or respond to what is currently inside that window. Anything outside it does not exist as far as the model is concerned. When a conversation grows longer than the context window, something has to be left out: many applications silently drop the earliest messages, and the model has no awareness that this has happened.

A rough guide: one token is approximately four characters of English text, or about three-quarters of a word. A 128,000-token context window holds roughly 90,000 to 100,000 words, about the length of a substantial novel. That sounds large until you are building a system that needs to process hundreds of documents simultaneously.
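The four-characters-per-token rule of thumb can be turned into a quick estimate. The following is a minimal sketch, not a real tokenizer: the function names and the `reserved_for_output` parameter are illustrative assumptions, and actual counts vary by model and by language.

```python
CHARS_PER_TOKEN = 4  # rough heuristic for English text; real tokenizers vary


def estimate_tokens(text: str) -> int:
    """Approximate the token count of English text from its character length."""
    # Ceiling division, so any non-empty text counts as at least one token.
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_window(texts: list[str], window_tokens: int,
                   reserved_for_output: int = 0) -> bool:
    """Check whether the combined texts fit while leaving room for the reply."""
    total = sum(estimate_tokens(t) for t in texts)
    return total + reserved_for_output <= window_tokens
```

An estimate like this is only good enough for early sizing. For anything close to the limit, count with the tokenizer that belongs to the model you are using.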

Early commercial language models had context windows of around 4,000 tokens. This was enough for a short conversation or a brief document, but it imposed significant constraints on what you could build. Systems required complex workarounds to handle longer inputs: chunking documents, summarising conversation history, and managing which information to keep in context at any given moment.

The context windows available now are orders of magnitude larger. Claude 3.5 Sonnet supports 200,000 tokens. Gemini 1.5 Pro has been tested at one million tokens. This expansion has changed what is architecturally possible: you can now pass in an entire codebase, a long legal document, or an extended conversation history without hitting the limit in most use cases.

But larger context windows do not eliminate the constraints. They shift them. The practical implications are more nuanced than simply ‘more is better’.

For most straightforward applications (a chatbot, a summarisation tool, a document Q&A system handling normal-length documents), context window size is rarely the binding constraint today. Current models provide enough room to work with.

Where it becomes significant is in more complex architectures. Multi-step agent workflows accumulate context quickly. Each tool call, each intermediate result, each step of reasoning adds to the total. A long-running agent that has performed twenty steps, retrieved several documents, and maintained a conversation history can hit limits faster than you might expect.
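One way to see this accumulation is to keep a running tally per step. The sketch below is an assumption-laden illustration: `ContextBudget`, its fields, and the 80% warning threshold are hypothetical names and values, not part of any agent framework.

```python
from dataclasses import dataclass, field


@dataclass
class ContextBudget:
    """Tracks estimated token usage across the steps of an agent run."""
    window_tokens: int
    warn_fraction: float = 0.8  # hypothetical threshold for acting early
    used: int = 0
    log: list[tuple[str, int]] = field(default_factory=list)

    def add(self, label: str, tokens: int) -> None:
        """Record the tokens contributed by one prompt, tool call, or result."""
        self.used += tokens
        self.log.append((label, tokens))

    @property
    def remaining(self) -> int:
        """Tokens still available before the window is full."""
        return self.window_tokens - self.used

    def needs_compaction(self) -> bool:
        """True once usage crosses the warning threshold."""
        return self.used >= self.warn_fraction * self.window_tokens
```

As an illustrative calculation, a 2,000-token system prompt plus twenty steps of 6,000 tokens each comes to 122,000 tokens, which is already past the 80% mark of a 128,000-token window.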

The same applies to applications that need to process very large inputs simultaneously: comparing multiple long contracts, analysing an entire codebase for a specific pattern, or synthesising a large set of research documents in one pass. Here, context window size becomes a genuine architectural decision, not just a spec to note.

Cost is also a direct function of context. Most API pricing is based on input and output tokens. A larger context window used fully means a higher cost per request. In high-volume applications, this adds up quickly and needs to be accounted for in system design.
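The relationship is simple arithmetic, sketched below. The function name and the per-million-token pricing parameters are assumptions for illustration. Real prices differ by provider and model, so they are passed in rather than hard-coded.

```python
def request_cost(input_tokens: int, output_tokens: int,
                 input_price_per_million: float,
                 output_price_per_million: float) -> float:
    """Cost of one request when input and output tokens are priced separately."""
    return (input_tokens * input_price_per_million
            + output_tokens * output_price_per_million) / 1_000_000


def daily_cost(requests_per_day: int, cost_per_request: float) -> float:
    """Scale a per-request cost to a daily volume."""
    return requests_per_day * cost_per_request
```

With placeholder prices of 3 and 15 units per million input and output tokens, a request carrying 100,000 input tokens and 1,000 output tokens costs about 0.315 units. Almost all of that comes from the input side, which is why trimming the context is usually the first lever to pull.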

Having a large context window does not mean the model uses all of it equally well. Research has shown that language models tend to pay more attention to information at the beginning and end of a context window than to information in the middle. This is sometimes called the ‘lost in the middle’ problem, and it has real consequences for how you structure inputs.

If you are building a system that retrieves multiple documents and passes them to a model, where you place the most relevant information in the prompt matters. The critical context belongs near the beginning or the end, not buried in the middle of a long document dump. This is not intuitive, and it is one of the reasons that simply increasing the context window does not automatically improve output quality.
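A simple way to act on this is to reorder retrieved documents before building the prompt. The sketch below assumes the documents arrive already ranked from most to least relevant. `order_for_edges` is a hypothetical helper, and this alternating scheme is one possible arrangement rather than an established standard.

```python
def order_for_edges(ranked_docs: list[str]) -> list[str]:
    """Place the most relevant documents at the start and end of the list.

    Input is ranked most-relevant first. Items alternate between the front
    and the back, so the least relevant ones end up in the middle.
    """
    front: list[str] = []
    back: list[str] = []
    for i, doc in enumerate(ranked_docs):
        (front if i % 2 == 0 else back).append(doc)
    return front + back[::-1]
```

For five documents ranked `a` to `e`, this yields the order `a, c, e, d, b`: the two strongest documents sit at the edges and the weakest sits in the middle.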

It also means that in very long conversations, important instructions or constraints stated early may receive less weight as the conversation grows. For agent systems and long-running workflows, this needs to be managed explicitly, by restating key instructions periodically or by restructuring the context to keep critical information prominent.
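Restating instructions can be as simple as repeating them at the end of the prompt once the history passes a certain length. This is a minimal sketch under assumed names: `build_prompt`, the `restate_after` threshold, and the reminder wording are all illustrative, and the right threshold depends on the model and the task.

```python
def build_prompt(instructions: str, turns: list[str],
                 restate_after: int = 10) -> str:
    """Assemble a prompt, repeating the instructions at the end of long histories."""
    parts = [instructions, *turns]
    if len(turns) >= restate_after:
        # Long history: restate the key instructions where they are most prominent.
        parts.append("Reminder of the key instructions: " + instructions)
    return "\n\n".join(parts)
```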

For teams building seriously on top of language models, context management is a design discipline in its own right. Deciding what to include in the context, in what order, and at what level of detail is not a trivial question. It affects quality, cost, latency, and reliability.

This is one reason why retrieval-augmented generation, which fetches only the most relevant documents for a given query rather than passing everything at once, remains a strong pattern even as context windows grow. Selective retrieval often produces better results than flooding the model with loosely relevant information. It is also cheaper and faster.
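The selection step can be sketched in a few lines. This toy version scores documents by keyword overlap with the query and stops at a token budget. Production systems typically use embedding-based search instead, and the names `score` and `retrieve`, the defaults, and the four-characters-per-token estimate are assumptions made for illustration.

```python
import re


def _words(text: str) -> set[str]:
    """Lower-cased alphanumeric words in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def score(query: str, doc: str) -> float:
    """Fraction of query words that also appear in the document."""
    q = _words(query)
    return len(q & _words(doc)) / len(q) if q else 0.0


def retrieve(query: str, docs: list[str], top_k: int = 3,
             max_tokens: int = 2000) -> list[str]:
    """Return up to top_k relevant documents that fit within a token budget."""
    ranked = sorted(docs, key=lambda d: score(query, d), reverse=True)
    selected: list[str] = []
    used = 0
    for doc in ranked[:top_k]:
        if score(query, doc) == 0:
            break  # everything after this point is unrelated to the query
        cost = len(doc) // 4 + 1  # rough token estimate
        if used + cost > max_tokens:
            continue  # skip documents that would overflow the budget
        selected.append(doc)
        used += cost
    return selected
```

The point of the budget is that relevance alone is not enough: even a well-ranked list has to be cut off somewhere, and that cut-off is a design choice.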

The same principle applies to conversation history management. Rather than appending every message indefinitely, well-designed systems summarise older history, maintain a structured memory of key facts, and keep the active context focused on what is actually relevant to the current task.
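A minimal version of this keeps the most recent messages verbatim and replaces everything older with a single summary. In the sketch below, `compact_history` and the message shape are assumptions, and `summarise` stands in for whatever produces the summary, which in practice is often another model call.

```python
from typing import Callable

Message = dict[str, str]  # assumed shape: {"role": ..., "content": ...}


def compact_history(messages: list[Message], keep_recent: int,
                    summarise: Callable[[list[Message]], str]) -> list[Message]:
    """Replace all but the most recent messages with one summary message."""
    if keep_recent < 0:
        raise ValueError("keep_recent must be non-negative")
    if len(messages) <= keep_recent:
        return list(messages)  # nothing old enough to summarise
    split = len(messages) - keep_recent
    older, recent = messages[:split], messages[split:]
    summary: Message = {
        "role": "system",
        "content": "Summary of earlier conversation: " + summarise(older),
    }
    return [summary] + recent
```

Passing the summariser in as a function keeps the policy (how much to keep) separate from the mechanism (how to summarise), so either can change without touching the other.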

Context window size is one of the more important variables to understand when choosing a model or designing an AI system. It is not the only variable, and for many use cases it will not be the limiting factor. But it shapes what is possible, what things cost, and where systems tend to fail in non-obvious ways.

The teams that build reliable AI systems understand these constraints and design around them deliberately. They do not assume that a larger context window solves the problem. They think carefully about what goes into the context, how it is structured, and what happens as the context grows over time.

If you are thinking through the architecture of an AI system, we are happy to have that conversation.