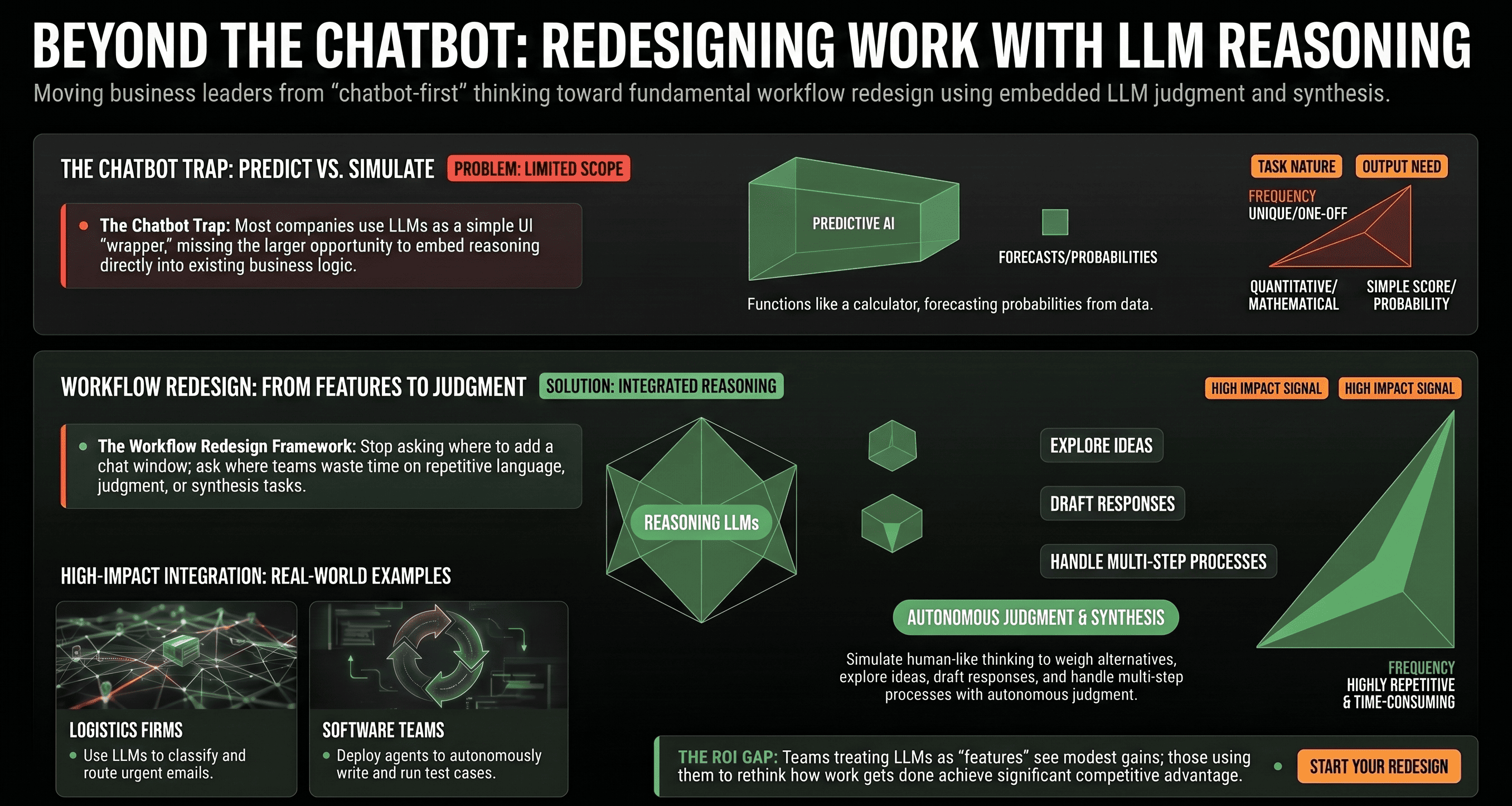

There's an important distinction worth making before we move further: traditional AI predicts, while LLMs simulate.

It might sound like a small difference, but it completely changes how you should think about and use them.

Traditional predictive AI has been around for years. It looks at historical data, finds patterns, and forecasts an outcome. A churn model tells you which customers are likely to leave. A recommendation engine guesses what a user might click on next. It gives you a specific answer, usually in the form of a probability. Very useful, but narrow in scope.

LLMs work differently. Instead of spitting out a probability, they reason through a problem. They explore ideas, weigh alternatives, draft responses, and produce something that actually feels like thinking, not just scoring. If traditional AI is a calculator, an LLM is more like a smart collaborator. This shift fundamentally changes what you should build with them.

When most companies first start playing with LLMs, their immediate instinct is to build a chatbot. Slap it on top of the product, connect it to the documentation, and call it an "AI assistant." That's not always a bad idea; there are cases where a conversational interface makes sense. But it often misses the much bigger opportunity.

A chatbot is just a wrapper. What LLMs actually unlock is the ability to embed reasoning directly into your workflows. They can draft content, review work, make structured decisions, and even handle multi-step processes with a surprising degree of autonomy. The companies getting the most value from LLMs aren't asking, "Where can we add a chat interface?" They're asking: "Where is our team currently wasting time on tasks that involve language, judgment, or synthesis?" Those are the real opportunities.

A logistics company uses an LLM to automatically classify incoming emails by urgency, extract important details, and route them to the right team without any human touching them until it actually matters. A financial firm uses it to generate first-draft summaries of long regulatory documents, dramatically cutting down review time. A software team has an LLM-powered agent that writes and runs basic test cases, freeing up engineers to focus on more complex work.
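To make the email-routing example a little more concrete, here is a minimal sketch of what "reasoning embedded in a workflow" could look like in code. Everything in it is an assumption for illustration: the `Triage` fields, the queue names, and especially `keyword_classifier`, which is a simple rule-based stand-in for wherever an LLM call would go. The point is the shape of the workflow, not the classifier.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Triage:
    """Structured result for one email (hypothetical schema)."""
    urgency: str                      # "normal" or "high"
    team: str                         # queue the email should land in
    details: Dict[str, str] = field(default_factory=dict)


def keyword_classifier(email_text: str) -> Triage:
    """Rule-based stand-in for an LLM call that returns structured output."""
    text = email_text.lower()
    urgency = "high" if ("urgent" in text or "delayed" in text) else "normal"
    team = "billing" if "invoice" in text else "operations"
    return Triage(urgency=urgency, team=team)


def route(email_text: str,
          classify: Callable[[str], Triage],
          queues: Dict[str, List[str]]) -> Triage:
    """Classify an email, file it in its team queue, and flag urgent ones."""
    result = classify(email_text)
    queues.setdefault(result.team, []).append(email_text)
    # A human only gets involved when the email is flagged as urgent.
    if result.urgency == "high":
        queues.setdefault("human_review", []).append(email_text)
    return result


queues: Dict[str, List[str]] = {}
route("URGENT: shipment 123 delayed at port", keyword_classifier, queues)
route("Question about last month's invoice", keyword_classifier, queues)
```

Because `route` only depends on a function that turns text into a `Triage`, the stand-in can later be swapped for a real model call without touching the rest of the workflow.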

None of these examples are chatbots. They're all workflow redesigns.

The difference in outcomes tends to come down to how teams frame the problem.

When evaluating LLM opportunities in your own company or product, score potential use cases based on how much language, judgment, or synthesis is involved; how repetitive or time-consuming the task currently is; how much structure the output needs; and how much human oversight is acceptable. The higher these factors, the bigger the potential impact.
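One way to apply these criteria is a simple scorecard. The sketch below is only an illustration: the 1-to-5 scale, the equal weighting, the field names, and the example ratings are all assumptions, not a validated method. Adjust them to fit your own context.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class UseCase:
    """A candidate LLM use case, rated 1 (low) to 5 (high) on each factor."""
    name: str
    language_judgment: int    # how much language, judgment, or synthesis is involved
    repetitiveness: int       # how repetitive or time-consuming the task is today
    output_structure: int     # how much structure the output needs
    oversight_tolerance: int  # how much human oversight is acceptable

    def score(self) -> float:
        """Equal-weight average of the four factors (weighting is an assumption)."""
        factors = (self.language_judgment, self.repetitiveness,
                   self.output_structure, self.oversight_tolerance)
        if any(not 1 <= f <= 5 for f in factors):
            raise ValueError("each factor must be rated from 1 to 5")
        return sum(factors) / len(factors)


def rank(use_cases: List[UseCase]) -> List[UseCase]:
    """Return use cases ordered from highest to lowest score."""
    return sorted(use_cases, key=lambda uc: uc.score(), reverse=True)


# Hypothetical ratings, for illustration only.
candidates = [
    UseCase("Docs chatbot", 3, 2, 1, 3),
    UseCase("Email triage", 4, 5, 4, 3),
    UseCase("Regulatory summaries", 5, 3, 3, 2),
]

for uc in rank(candidates):
    print(f"{uc.name}: {uc.score():.2f}")
```

A scorecard like this will not make the decision for you, but it forces each candidate to be rated on the same criteria before anyone starts building.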

Before you rush into any LLM integration, ask yourself this: "What would this process look like if a highly capable human assistant could automatically handle all the language-heavy, repetitive, or synthesis parts?" Start there. Map out the current workflow. Identify where language, judgment, or structured output is the bottleneck. Then design the solution around that, not around slapping a chat window on top.

LLMs are a powerful tool for redesigning how work actually gets done. Teams that treat them as just another feature to add will usually see modest improvements. Teams that use them to fundamentally rethink their workflows are the ones seeing meaningful gains.

LLMs are not just smarter search engines or fancy chatbots. They are the first technology that can simulate human-like reasoning at scale for language-based work. The companies that will win with LLMs are those that stop thinking in terms of "AI features" and start thinking in terms of process transformation.

If you're trying to figure out where LLMs can create real impact in your product, operations, or workflows, we'd be happy to explore it with you. Just reach out here.